AWS online Dev Day

Wednesday April 8th, AWS DEV DAY ONLINE!

Awesome. As usual I wanted to follow all sessions. Also, as usual I did not have time for that. I joined and summarized:

- CI/CD for Serverless Applications by Marcia Villalba

- Infrastructure as Code Deep Dive by Darko Mesaroš

- End-to-End Observability to Better Understand Your Serverless Apps by Danilo Poccia

CI/CD for Serverless Applications

CodePipeline, CodeBuild, CodeDeploy and CloudFormation

You want one immutable artifact that is deployed to multiple environments and multiple accounts.

AWS CodePipeline can help you orchestrate such a workflow on AWS.

CodePipeline:

- integrates with third-party tools like GitHub

- a pipeline consists of multiple stages

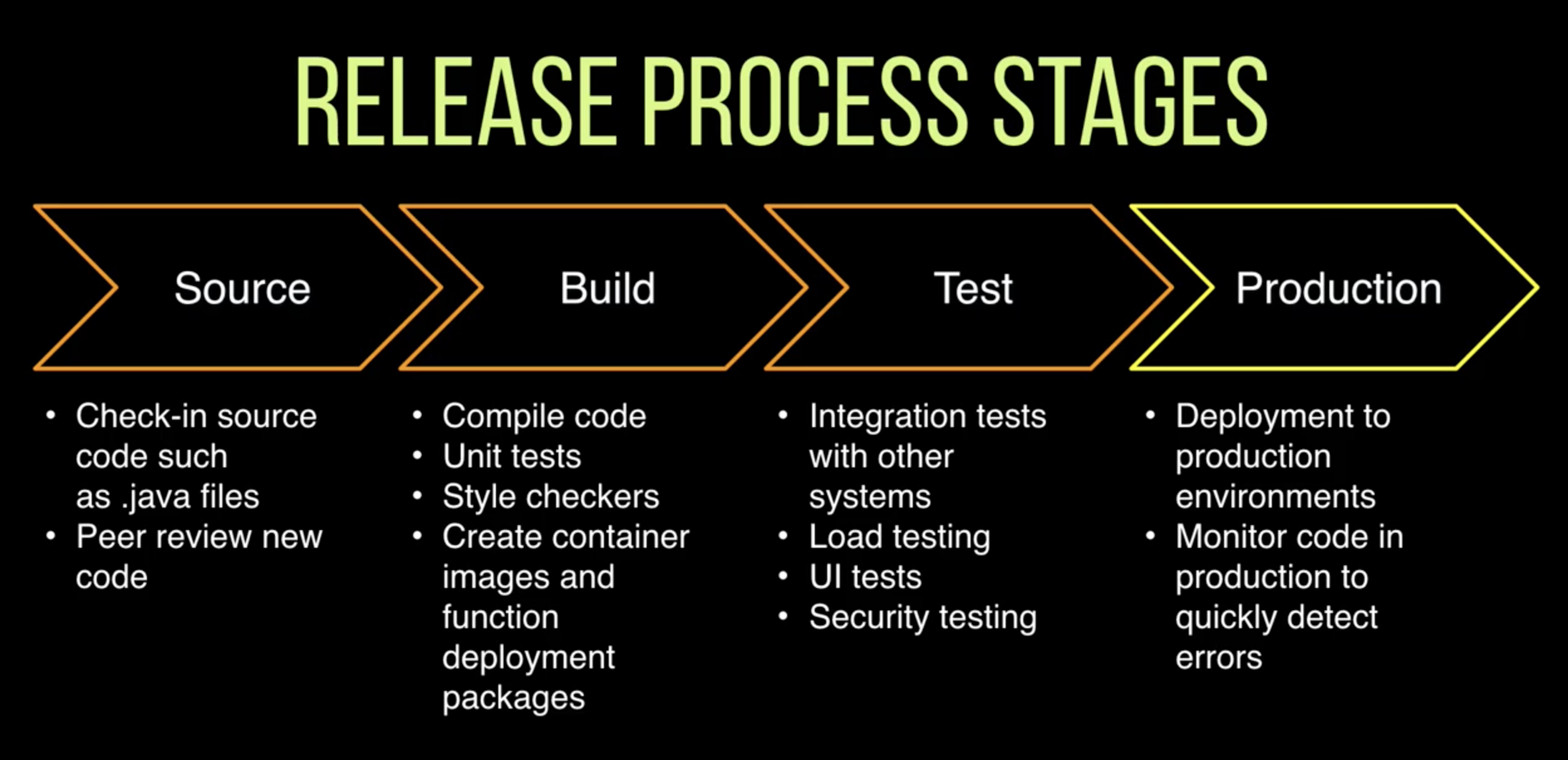

In CodePipeline you define the stages that your pipeline consists of.

A stage can for example be "DeployToStaging".

This stage then contains multiple actions, for example: "CreateChangeSet" and "ExecuteChangeSet".
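To make the stage/action split concrete, here is a minimal sketch of what such a stage could look like inside the `Stages` list of an `AWS::CodePipeline::Pipeline` resource, written as a Python dict and printed as JSON. The stack name, change set name, artifact name `BuildOutput`, template file name and role ARN are placeholders I made up; they do not come from the talk.

```python
import json

# Hypothetical names, chosen for illustration only.
STACK_NAME = "my-app-staging"
CHANGE_SET_NAME = "my-app-staging-changeset"
CFN_ROLE_ARN = "arn:aws:iam::123456789012:role/cfn-deploy-role"


def cloudformation_action(name, action_mode, run_order, extra_config=None, input_artifacts=None):
    """Build one CloudFormation deploy action for a CodePipeline stage."""
    config = {
        "ActionMode": action_mode,
        "StackName": STACK_NAME,
        "ChangeSetName": CHANGE_SET_NAME,
    }
    config.update(extra_config or {})
    action = {
        "Name": name,
        "ActionTypeId": {
            "Category": "Deploy",
            "Owner": "AWS",
            "Provider": "CloudFormation",
            "Version": "1",
        },
        "Configuration": config,
        "RunOrder": run_order,
    }
    if input_artifacts:
        action["InputArtifacts"] = [{"Name": a} for a in input_artifacts]
    return action


# One stage, two actions: first create the change set, then execute it.
deploy_to_staging = {
    "Name": "DeployToStaging",
    "Actions": [
        cloudformation_action(
            "CreateChangeSet",
            "CHANGE_SET_REPLACE",
            run_order=1,
            extra_config={
                "TemplatePath": "BuildOutput::packaged.yaml",
                "Capabilities": "CAPABILITY_IAM",
                "RoleArn": CFN_ROLE_ARN,
            },
            input_artifacts=["BuildOutput"],
        ),
        cloudformation_action("ExecuteChangeSet", "CHANGE_SET_EXECUTE", run_order=2),
    ],
}

print(json.dumps(deploy_to_staging, indent=2))
```

The `RunOrder` values make sure the change set is created before it is executed.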

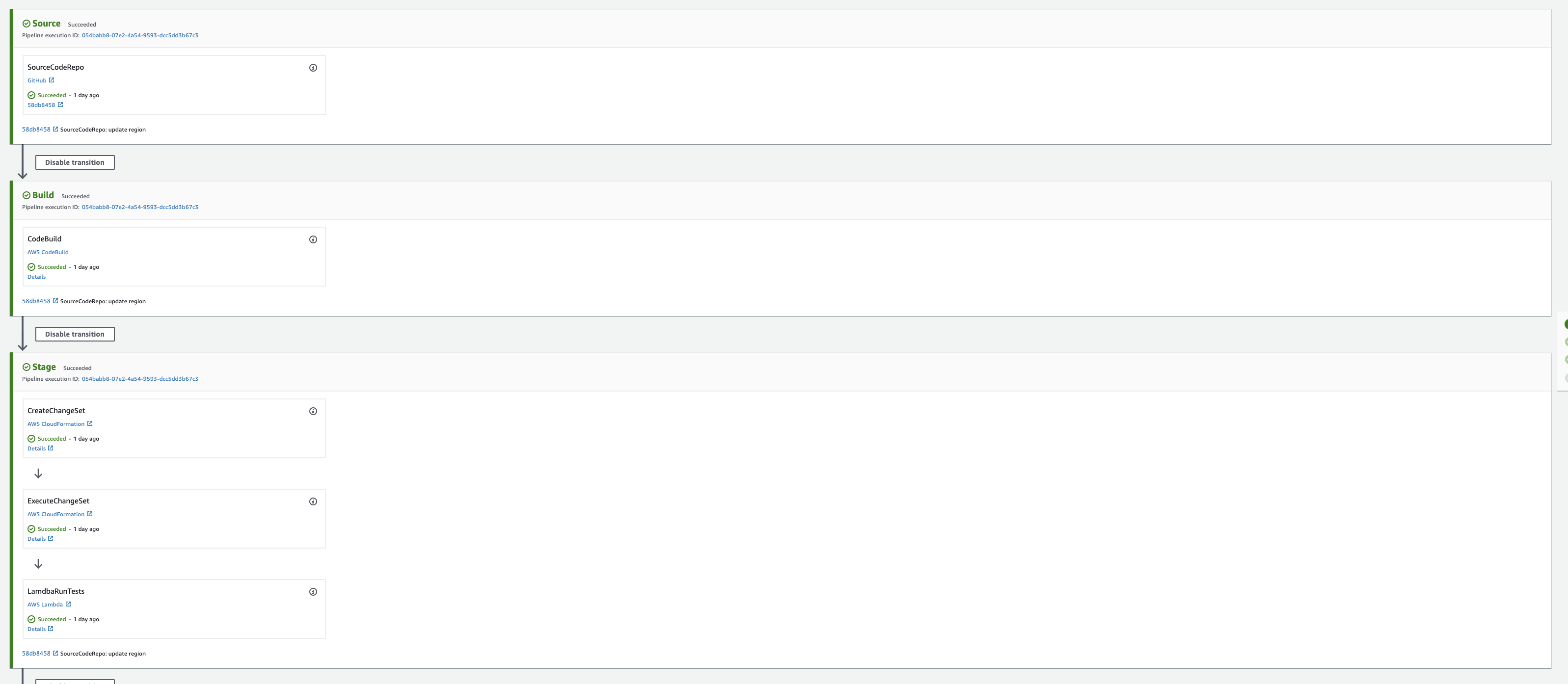

The image above shows an example of such a pipeline.

CodePipeline, CodeBuild and CodeDeploy

These terms are often confused.

- CodePipeline is what orchestrates the steps in your pipeline.

- CodeBuild is about creating an artifact which will later be deployed to the cloud environment.

  - For example, CodeBuild spins up a runtime with Java installed, compiles the code, packages the output JAR together with the CloudFormation template and stores this artifact on S3.

  - It then passes the reference to the artifact along to the next step of your pipeline.

- CodeDeploy is about specifying how you want the artifact to be deployed, for example using a canary release strategy.

  - You don't have to use CodeDeploy to deploy your artifact. If you do not need a specific deployment strategy, you can deploy with CloudFormation alone. This is also what the example project uses: https://github.com/mavi888/demo-cicd-codepipeline

Pipeline as code

You can create a pipeline as Infrastructure as Code.

Which is probably what you want.

Though I advise you not to write a pipeline as IaC from scratch yourself.

Look at an example and modify it.

I speak from experience when I say it can be quite cumbersome to build it up from scratch.

In this project you can find a great example from Marcia: https://github.com/mavi888/demo-cicd-codepipeline

It was very interesting to see how you can also run integration tests and then return the results of those tests to your pipeline.

An example can also be found in the GitHub repo I linked above.
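One way to feed test results back is a Lambda invoke action: CodePipeline passes a job id in the event, and the function has to report success or failure for that job. Below is a minimal sketch of that reporting step. I have not checked whether the demo repo does it exactly this way, and the helper name `report_test_result` and the `FakeCodePipeline` class are my own. In a real function you would pass in `boto3.client("codepipeline")` instead of the fake.

```python
def report_test_result(event, tests_passed, codepipeline, message="Integration tests failed"):
    """Tell CodePipeline whether the job that invoked us succeeded or failed."""
    job_id = event["CodePipeline.job"]["id"]
    if tests_passed:
        codepipeline.put_job_success_result(jobId=job_id)
    else:
        codepipeline.put_job_failure_result(
            jobId=job_id,
            failureDetails={"type": "JobFailed", "message": message},
        )
    return job_id


class FakeCodePipeline:
    """Stand-in for boto3.client("codepipeline") so the sketch runs offline."""

    def __init__(self):
        self.calls = []

    def put_job_success_result(self, **kwargs):
        self.calls.append(("success", kwargs))

    def put_job_failure_result(self, **kwargs):
        self.calls.append(("failure", kwargs))


# Simulate the event CodePipeline sends and a failing test run.
event = {"CodePipeline.job": {"id": "example-job-id"}}
fake = FakeCodePipeline()
report_test_result(event, tests_passed=False, codepipeline=fake)
print(fake.calls)
```

If the function never reports back, the pipeline action keeps waiting until it times out, so make sure every code path ends in one of the two calls.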

Fancy deployments

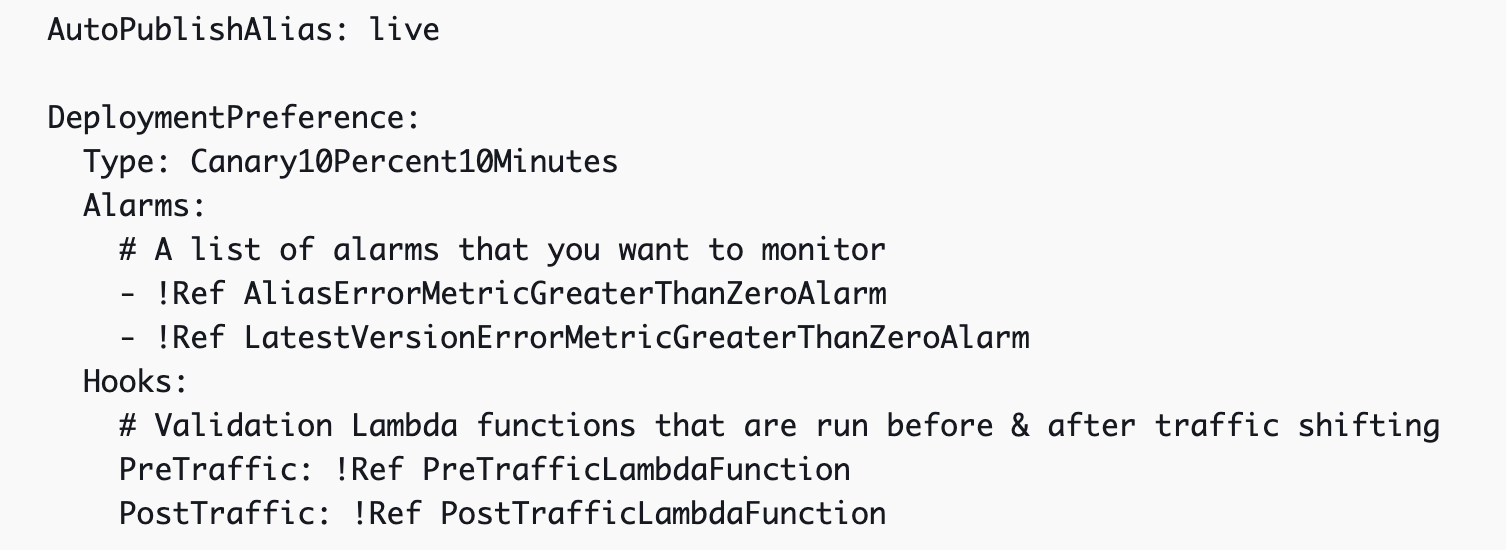

You can define how you prefer your deployment to happen by specifying a DeploymentPreference on the function in your SAM template.

That way you can define how you want traffic to shift and which validations need to happen.
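The preference types have names like `Canary10Percent5Minutes` or `Linear10PercentEvery1Minute`. To show what those names mean, here is a small sketch that turns a name into a traffic schedule. This is only my reading of the naming scheme; the real shifting is done by CodeDeploy, and the function `traffic_schedule` is something I made up for illustration.

```python
import re


def traffic_schedule(preference):
    """Return [(minute, percent_on_new_version), ...] for a preference name."""
    if preference == "AllAtOnce":
        return [(0, 100)]

    # Canary: shift a first slice, wait, then shift everything else.
    canary = re.fullmatch(r"Canary(\d+)Percent(\d+)Minutes", preference)
    if canary:
        percent, minutes = int(canary.group(1)), int(canary.group(2))
        return [(0, percent), (minutes, 100)]

    # Linear: shift equal slices at a fixed interval until 100% is reached.
    linear = re.fullmatch(r"Linear(\d+)PercentEvery(\d+)Minutes?", preference)
    if linear:
        step, interval = int(linear.group(1)), int(linear.group(2))
        schedule = []
        minute, percent = 0, step
        while percent < 100:
            schedule.append((minute, percent))
            minute += interval
            percent += step
        schedule.append((minute, 100))
        return schedule

    raise ValueError(f"Unknown deployment preference: {preference}")


for name in ("AllAtOnce", "Canary10Percent5Minutes", "Linear10PercentEvery10Minutes"):
    print(name, traffic_schedule(name))
```

With a canary only a small slice of traffic hits the new version while your alarms and validation hooks get a chance to stop the rollout.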

More info can be found here:

Infrastructure as Code deep dive

Making the engineer's life better!

- stability

- immutable infrastructure

Use tools to increase the quality of your IaC!

- cfn-lint: validates your templates against the CloudFormation resource specification

- cfn_nag: command-line utility that enforces rules on your IaC files

- taskcat: tests your templates by actually deploying them

Do you already...

- use CloudFormation 'import existing resources' to bring existing resources into a stack?

- use CloudFormation resource import to fix drift?

- use Secrets Manager or SSM Parameter Store to store keys, environment variables and secrets instead of hardcoding them?
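For the last point, CloudFormation supports dynamic references, so the template only contains a pointer to the value and never the value itself. A minimal sketch, with the template written as a Python dict: the parameter name `/myapp/db/instance-class`, the secret id `myapp/db` and the two helper functions are hypothetical, and the resource is a fragment that leaves out the other properties a real database would need.

```python
import json


def ssm_ref(parameter_name):
    """Dynamic reference to a plaintext SSM Parameter Store value."""
    return "{{resolve:ssm:" + parameter_name + "}}"


def secret_ref(secret_id, json_key):
    """Dynamic reference to one JSON key of a Secrets Manager secret."""
    return "{{resolve:secretsmanager:" + secret_id + ":SecretString:" + json_key + "}}"


# Fragment only: shows where the references go, not a deployable database.
template_fragment = {
    "Resources": {
        "Database": {
            "Type": "AWS::RDS::DBInstance",
            "Properties": {
                "DBInstanceClass": ssm_ref("/myapp/db/instance-class"),
                "MasterUsername": secret_ref("myapp/db", "username"),
                "MasterUserPassword": secret_ref("myapp/db", "password"),
            },
        }
    }
}

print(json.dumps(template_fragment, indent=2))
```

CloudFormation resolves the references at deploy time, so the secret never ends up in your repository.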

CDK

The AWS CDK is new to me. So my primary purpose was to see what it is and check if there would be value in using it!

Abstraction is the keyword here for me.

CDK lets you define Infrastructure as Code, REAL code.

You can use Java, Python, C#, JavaScript and TypeScript to define your infrastructure.

So no more writing YAML or JSON.

CDK often allows you to reduce the number of lines in your IaC template by an order of magnitude.

Of course, to do so it relies on abstractions. And to abstract, you have to make choices for the user. So the higher the abstraction, the less code you have to write and the more opinionated your setup is.

Since it is REAL code now, you can easily write unit tests to test the definitions of your infrastructure.
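To illustrate both points (opinionated abstractions and unit tests) without needing the CDK installed, here is a toy in plain Python. It is explicitly not the CDK API: `secure_bucket` is a hypothetical helper that expands one call into a full CloudFormation bucket definition, and the tests assert on the generated definition, which is the same idea you would apply to a synthesized CDK stack.

```python
def secure_bucket(logical_id, versioned=True):
    """Hypothetical high-level helper: one call expands into a full bucket definition.

    The opinions baked in: encryption on, public access blocked,
    versioning on unless the caller opts out.
    """
    properties = {
        "BucketEncryption": {
            "ServerSideEncryptionConfiguration": [
                {"ServerSideEncryptionByDefault": {"SSEAlgorithm": "AES256"}}
            ]
        },
        "PublicAccessBlockConfiguration": {
            "BlockPublicAcls": True,
            "BlockPublicPolicy": True,
            "IgnorePublicAcls": True,
            "RestrictPublicBuckets": True,
        },
    }
    if versioned:
        properties["VersioningConfiguration"] = {"Status": "Enabled"}
    return {logical_id: {"Type": "AWS::S3::Bucket", "Properties": properties}}


def test_secure_bucket_defaults():
    props = secure_bucket("UploadsBucket")["UploadsBucket"]["Properties"]
    assert props["VersioningConfiguration"] == {"Status": "Enabled"}
    assert all(props["PublicAccessBlockConfiguration"].values())


def test_versioning_can_be_disabled():
    props = secure_bucket("TempBucket", versioned=False)["TempBucket"]["Properties"]
    assert "VersioningConfiguration" not in props


test_secure_bucket_defaults()
test_versioning_can_be_disabled()
print("all infrastructure tests passed")
```

One line of calling code replaces roughly twenty lines of template, at the price of accepting the defaults the helper chose for you.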

CloudFormation registry

The CloudFormation registry can be used to manage third-party resources in the same way as native AWS resources.

End-to-End Observability to Better Understand Your Serverless Apps

I sincerely advise anyone who uses FaaS on AWS to rewatch this talk!

I know first hand that observing a FaaS system in production requires knowing what you're doing (and why you're doing it) when setting up the tools to observe it.

Danilo created a sample app that he used during the demos and which can be found here

What is observability

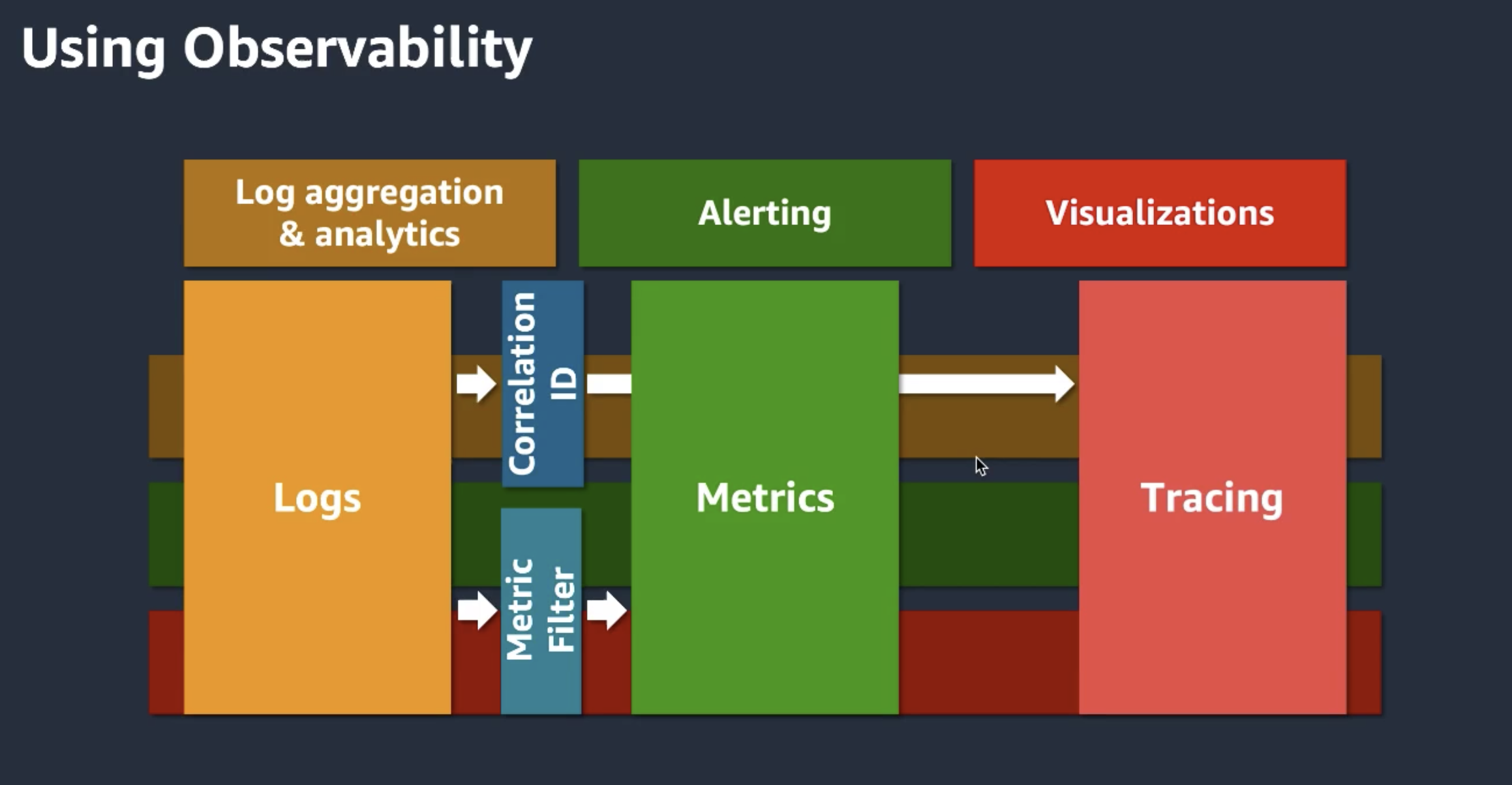

3 pillars: logging - metrics - tracing

Observability is a measure of how well you can understand the state of a system from the outside.

Handing us the right tools

Danilo started giving away a lot of awesome tips, tricks and tools. I summarize them here:

- Measure both technical and business metrics!

-

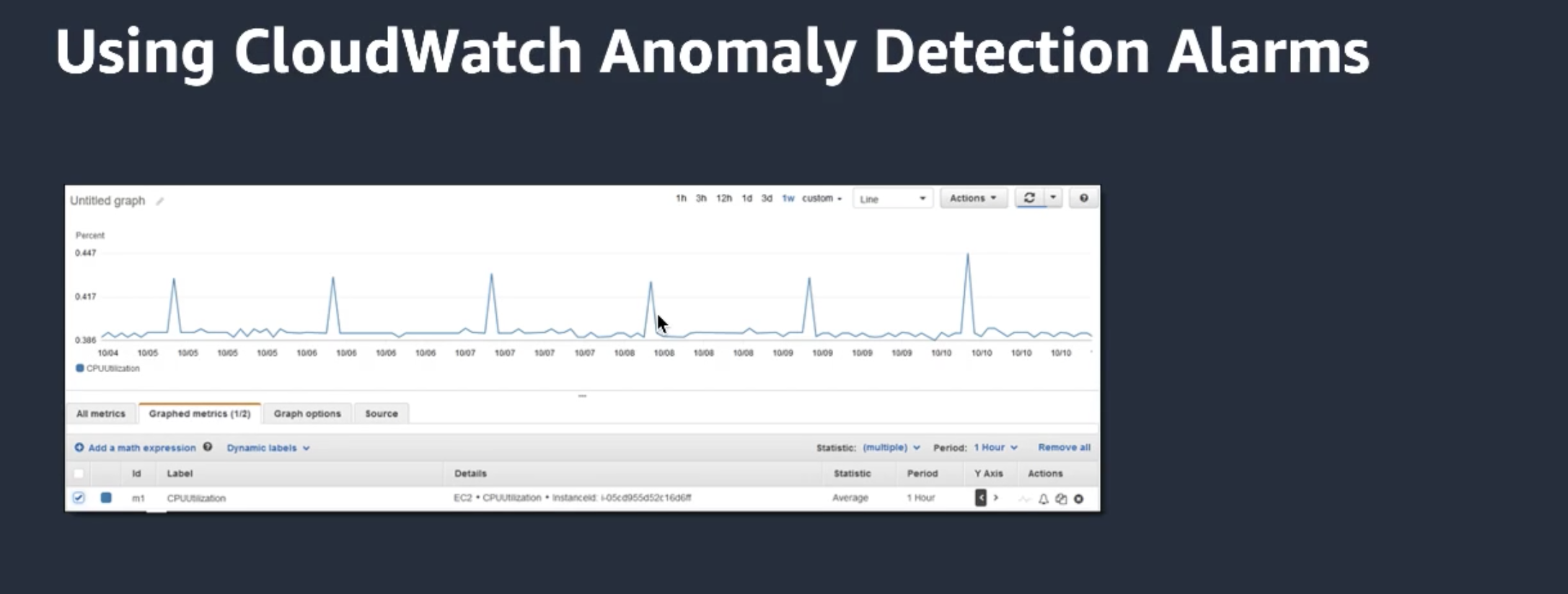

CloudWatch anamaly detection! Looks at the last 14 days (!but you can exclude a certain period of time if necessary)

- Set up alarms for anomalies or for deploys!

- Use Cloudwatch composite alarms to group alarms.

- Cloudwatch log insights! Very useful, query multiple loggroups.

-

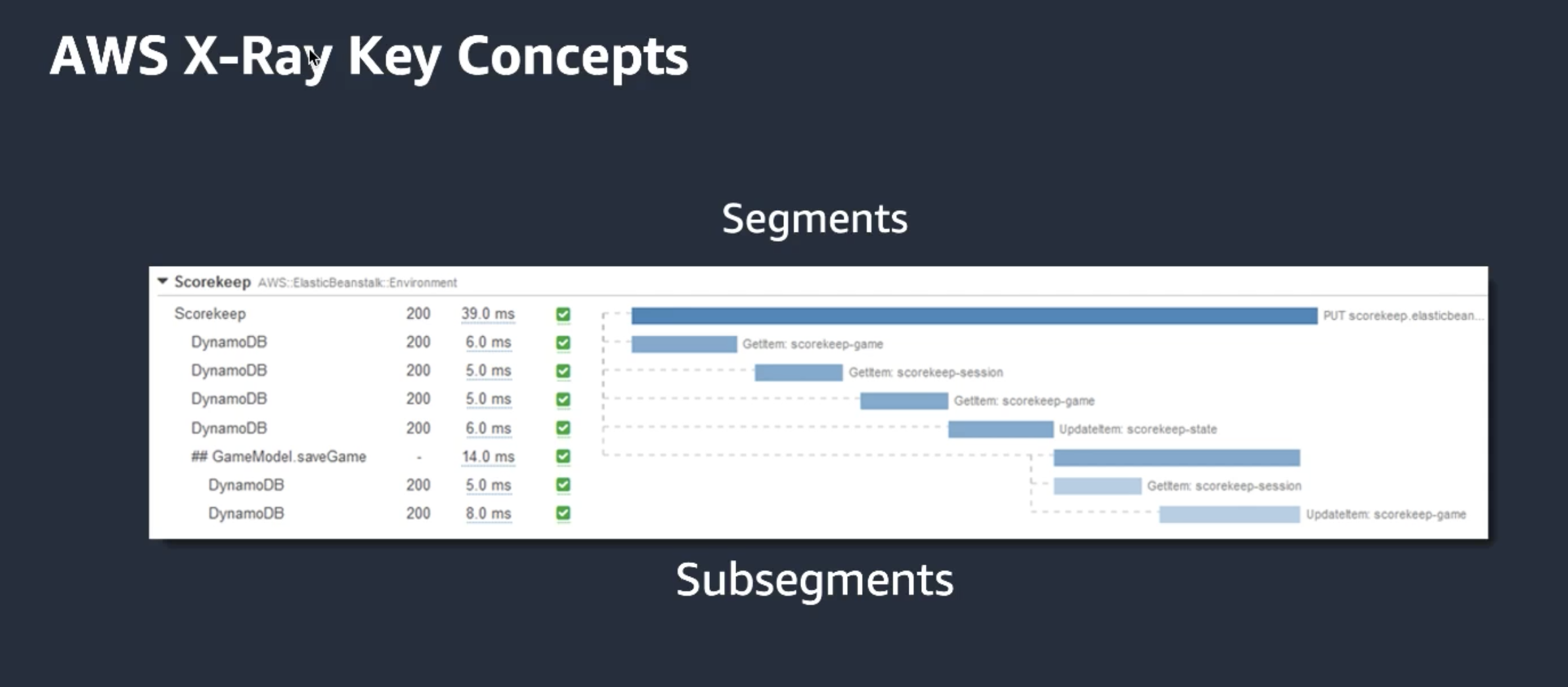

XRAY: trace your requests through your landscape. Wrap your sdk to trace calls. Wrap your http client if you also want to trace these calls.

-

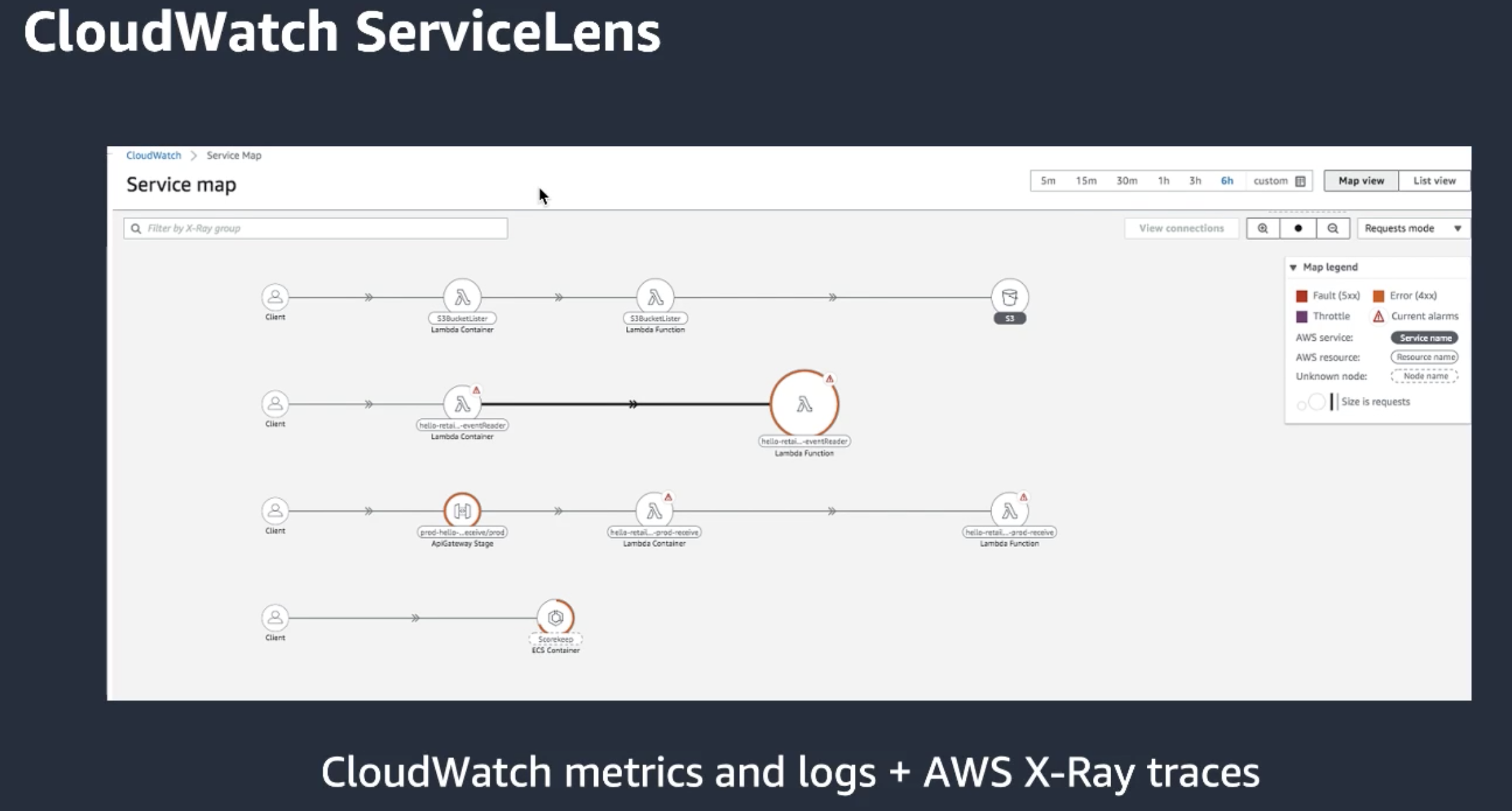

ServiceLens: combine Cloudwatch and Xray. Correlate the information from xray with the info in Cloudwatch

-

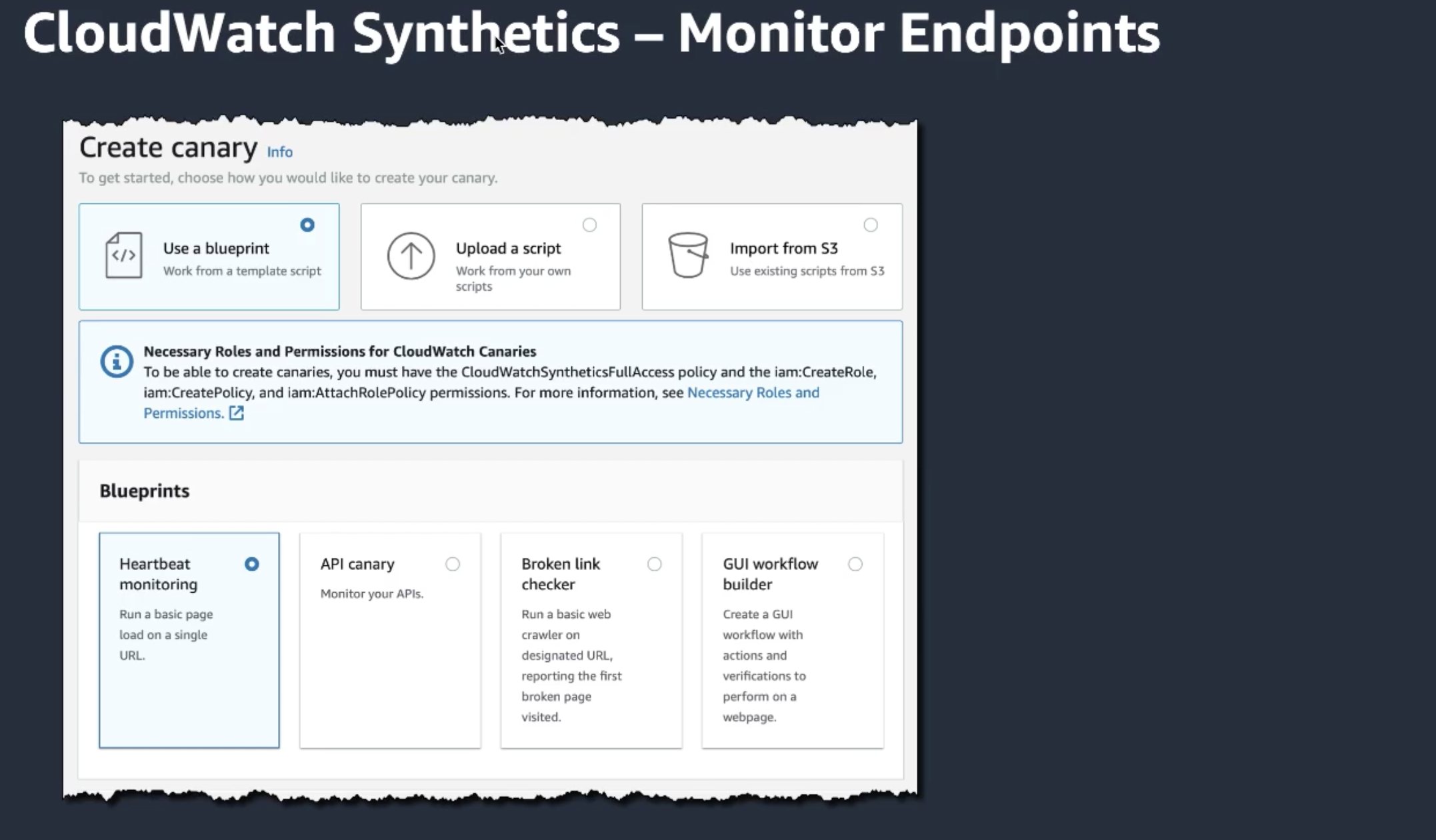

Synthetics: monitor the health of your own and external api's

-

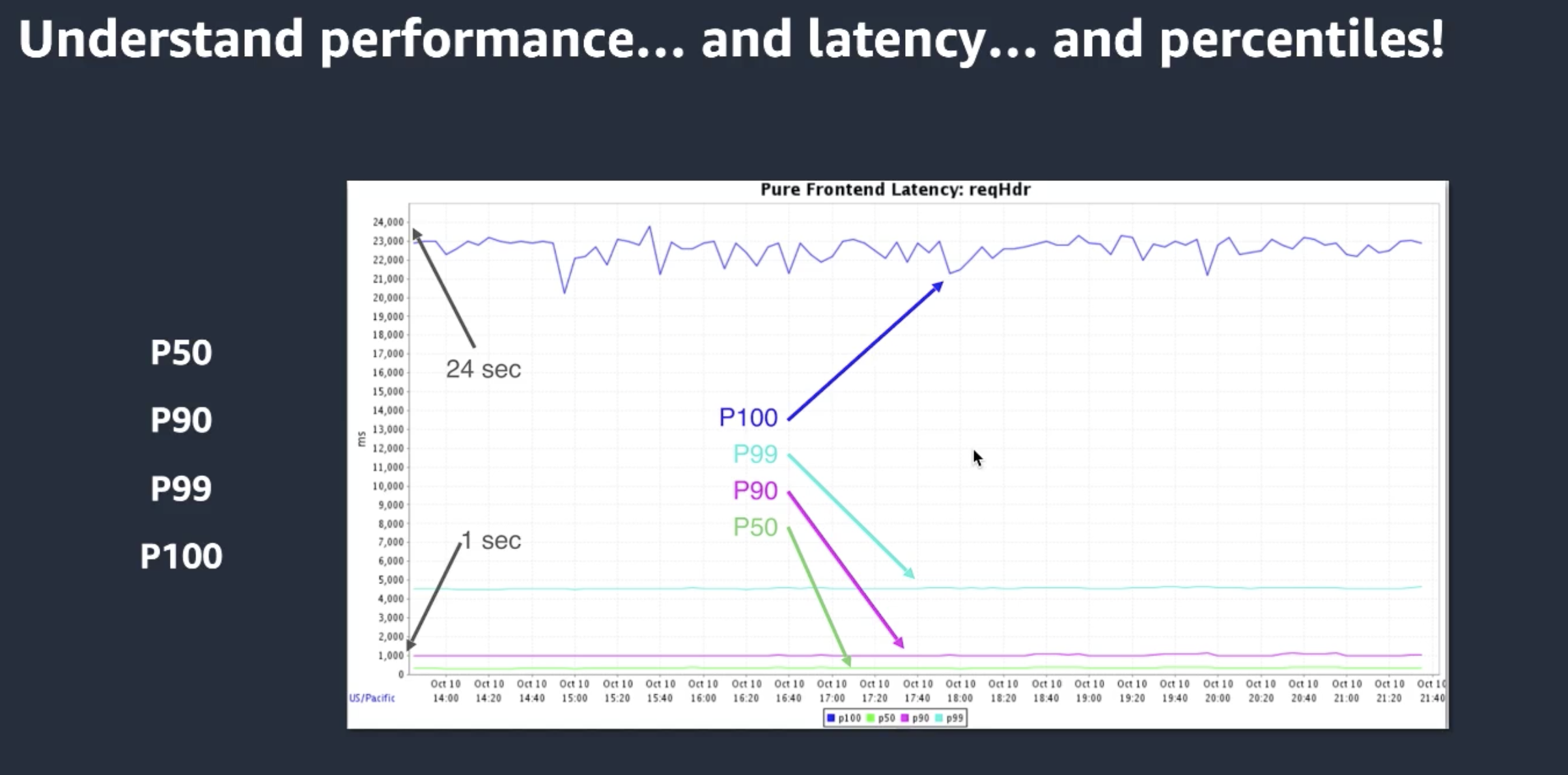

Use percentiles to visualize latency
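Why percentiles and not the average? A tiny sketch with made-up latencies shows it: a couple of very slow requests barely move the average, while the p99 exposes them immediately. The numbers and the `percentile` helper (a simple nearest-rank implementation) are mine, not from the talk.

```python
import math


def percentile(values, p):
    """Nearest-rank percentile: smallest value with at least p% of samples at or below it."""
    ordered = sorted(values)
    rank = max(1, math.ceil(p * len(ordered) / 100))
    return ordered[rank - 1]


# Made-up sample: 98 fast requests and 2 very slow ones.
latencies_ms = [100] * 98 + [5000] * 2

mean = sum(latencies_ms) / len(latencies_ms)
print("mean:", mean)                          # 198.0
print("p50: ", percentile(latencies_ms, 50))  # 100
print("p95: ", percentile(latencies_ms, 95))  # 100
print("p99: ", percentile(latencies_ms, 99))  # 5000
```

The average of 198 ms looks fine, yet 2 out of every 100 users wait 5 seconds. In CloudWatch you get the same view by choosing a percentile statistic such as p99 for the metric instead of Average.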

Giveaways

At the end Danilo had a couple of nice giveaways:

- Lambda now has the concurrent executions metric for all Lambda functions, not only those with reserved concurrency

- Athena allows you to query your structured logs with SQL
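Querying logs by field only works if your logs have fields, so it pays off to log JSON instead of free text. A minimal sketch of a structured log helper: the function name `log_event` and the field names `order_id` and `latency_ms` are just examples I picked.

```python
import json
import time


def log_event(level, message, **fields):
    """Print one JSON object per line so every field can be queried later."""
    record = {
        "timestamp": int(time.time() * 1000),  # epoch milliseconds
        "level": level,
        "message": message,
    }
    record.update(fields)
    line = json.dumps(record)
    print(line)
    return line


line = log_event("INFO", "order placed", order_id="o-123", latency_ms=42)
```

In a Lambda function everything printed to stdout ends up in CloudWatch Logs, where Logs Insights discovers the JSON fields automatically. Once the logs are exported to S3, Athena can query those same fields with SQL.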